The AI landscape is evolving faster than ever. Frontier models like Claude Opus 4.6, GPT-5, and other agentic systems can now reason about code, synthesize complex workflows, and assist with multimodal tasks that just a few years ago seemed impossible.

At the same time, robotics — or Physical AI — is entering an era of unprecedented capability. Robots can perform sophisticated tasks with remarkable autonomy, bringing us closer to truly intelligent systems.

But as anyone working in robotics knows, these systems still fail… a lot, and making them reliable is extremely challenging. At Amazon Robotics, we saw firsthand that building dependable robotic systems ultimately comes down to the debugging loop: how quickly can you find issues, understand what happened, and fix them?

Despite all the AI advances, one of the slowest and least optimized parts of robotics development remains analyzing logs and figuring out what actually happened on a robot.

For most teams, log analysis is painfully manual. Engineers download gigabytes of logs, write custom scripts, export data to a notebook, plot a few signals—and then repeat the process when the next incident happens. As fleets grow and datasets scale, this workflow quickly breaks down.

One of the core ideas behind starting Roboto was simple: what if you could just ask your robotics data questions?

Imagine queries like:

When we first started exploring this idea, right before ChatGPT was released in 2022, it felt like a pipe dream.

In just a few years, the rapid progress in large language models has brought this vision much closer to reality.

Still, robotics data remains uniquely challenging, and turning raw logs into actionable insights is far from trivial.

Robotics data is fundamentally different from the kinds of data most AI models are trained to work with.

Unlike text or tabular datasets, robotics logs are highly complex. They span multiple modalities (video, LiDAR, IMU data, sensor readings, and algorithm outputs) and are often stored in specialized binary formats such as ROS bags or PX4 ULog files.

Even a log from a single robot session may contain gigabytes of data spread across dozens or hundreds of asynchronous topics, with deeply nested message schemas and multiple sensors.

Understanding what actually happened requires stitching together information from across these different topics.

Even simple questions like “Why did the robot stop?” can require digging through multiple topics, events, and system states.

Modern AI models are incredibly capable. But robotics logs are often tens of gigabytes in size, far too large to feed directly into an LLM. And even if you could, the model still wouldn’t produce meaningful answers.

Robotics logs present several fundamental challenges for language models:

1) The Tokenization Gap

Robotics logs are binary data streams, not natural language. Converting a 40 GB log file into a text format an LLM can read (such as JSON or CSV) causes the data size to explode, quickly exceeding the context window of even the largest models.
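To make the size blow-up concrete, here is a minimal sketch comparing the same samples packed as raw binary and serialized as JSON. The IMU record layout (seven 8-byte doubles) and the field names are assumptions made for illustration; real formats such as ROS bags or ULog carry their own headers and encodings, so actual ratios will differ.

```python
import json
import struct

# Hypothetical IMU sample: timestamp plus 3-axis accel and gyro,
# each stored as an 8-byte little-endian double (assumed layout).
FIELDS = ("t", "ax", "ay", "az", "gx", "gy", "gz")
RECORD = struct.Struct("<7d")


def make_samples(n):
    """Generate n synthetic samples at 1 kHz."""
    return [
        (i * 0.001, 0.0123456789 * i, -9.80665, 0.5, 0.001 * i, -0.002, 0.003)
        for i in range(n)
    ]


def binary_size(samples):
    """Bytes needed to store the samples as packed binary records."""
    return sum(len(RECORD.pack(*s)) for s in samples)


def json_size(samples):
    """Bytes needed to store the same samples as a JSON array of objects."""
    rows = [dict(zip(FIELDS, s)) for s in samples]
    return len(json.dumps(rows).encode("utf-8"))


samples = make_samples(10_000)
b, j = binary_size(samples), json_size(samples)
print(f"binary: {b} bytes, JSON: {j} bytes, ratio: {j / b:.1f}x")
```

The text form repeats every field name on every row and spells out each number digit by digit, and an LLM then splits those digits into many tokens, so the gap in tokens is typically wider than the gap in bytes.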

2) High Noise-to-Signal Ratio

Most robotics logs are overwhelmingly routine. The system is typically behaving normally.

The single millisecond-long sensor spike that triggered a failure may be buried within millions of lines of normal values.

3) Lack of System Context

Raw data rarely tells the full story.

Interpreting robotics logs requires understanding the system itself: message schemas, hardware configuration, software modules, and the code that produced the logs. Without this context, even a powerful model cannot reason effectively about what happened.

So we need a better approach.

Turning a general-purpose language model into a robotics expert isn’t about building a system that can read 40 GB of logs. It’s about building a system that knows which 40 KB actually contains the answer.

Today we’re introducing Roboto AI Chat — a conversational interface for your robotics data that works the way a great engineer does: understanding your system, reasoning about context, and surfacing the right data at the right moment.

Under the hood, Roboto AI Chat equips an AI agent with three key ingredients:

Grounded Inputs

Instead of always trying to feed the agent raw data in isolation, we provide structured events derived from deterministic checks.

These checks can be built using Roboto Actions and capture existing domain knowledge, from deterministic rules to anomaly detection and specialized ML models. Combined with platform metadata such as topic statistics and user-defined tags, this helps guide the agent directly to relevant segments of system behavior.
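As a rough illustration of what a deterministic check might look like, the sketch below scans a signal for threshold crossings and emits a compact event record instead of the raw samples. This is not the Roboto Actions API: the `Event` class, the `detect_spikes` function, the topic name, and the threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Event:
    """A structured finding an agent can read instead of raw samples (hypothetical schema)."""
    name: str
    topic: str
    start_s: float
    end_s: float
    peak: float


def detect_spikes(timestamps, values, topic, threshold):
    """Group consecutive samples whose magnitude exceeds threshold into events."""
    events = []
    current = None
    for t, v in zip(timestamps, values):
        if abs(v) > threshold:
            if current is None:
                current = Event("spike", topic, t, t, v)
            else:
                current.end_s = t
                if abs(v) > abs(current.peak):
                    current.peak = v
        elif current is not None:
            # The run of out-of-range samples has ended; close the event.
            events.append(current)
            current = None
    if current is not None:
        events.append(current)
    return events


# Synthetic signal: 100,000 quiet samples at 1 kHz with one two-sample spike.
timestamps = [i * 0.001 for i in range(100_000)]
values = [0.01] * 100_000
values[42_000] = 5.0
values[42_001] = 7.5

events = detect_spikes(timestamps, values, topic="/imu/accel_x", threshold=1.0)
for e in events:
    print(e)
```

Here 100,000 samples reduce to a single event record, which is the kind of input that fits comfortably in a model's context and points it at the right time window.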

System Context

Like any human engineer, an agent cannot interpret data in a vacuum.

It needs technical context about the system itself: message schemas, hardware mappings, system documentation, and potentially access to source code that explains how specific log messages are generated.

Expert Tools

Finally, the agent is equipped with specialized tools built on the Roboto SDK to retrieve exactly the data it needs. These tools allow the model to zoom in on relevant time windows, inspect specific topics, and verify hypotheses without ever processing gigabytes of raw data.
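The idea of zooming in on a time window can be sketched with plain Python. The example below is not the Roboto SDK: `slice_window` and the in-memory `log` layout are hypothetical stand-ins that assume each topic's timestamps are already sorted, so a binary search can find the window without scanning the whole log.

```python
from bisect import bisect_left, bisect_right


def slice_window(log, topics, center_s, half_width_s):
    """Return messages within [center - half_width, center + half_width] for each topic."""
    lo, hi = center_s - half_width_s, center_s + half_width_s
    result = {}
    for topic in topics:
        stamps, messages = log[topic]
        i = bisect_left(stamps, lo)   # first index with stamp >= lo
        j = bisect_right(stamps, hi)  # one past the last index with stamp <= hi
        result[topic] = list(zip(stamps[i:j], messages[i:j]))
    return result


# Synthetic log: {topic: (sorted timestamps in seconds, messages)}.
log = {
    "/battery": (
        [float(t) for t in range(300)],
        [{"voltage": 16.0 - 0.01 * t} for t in range(300)],
    ),
    "/gps": (
        [t * 0.5 for t in range(600)],
        [{"fix": True} for _ in range(600)],
    ),
}

window = slice_window(log, ["/battery", "/gps"], center_s=142.0, half_width_s=1.0)
for topic, rows in window.items():
    print(topic, len(rows), "messages")
```

A tool shaped like this lets an agent test a hypothesis such as "did the battery sag just before the stop?" by pulling a handful of messages rather than the whole file.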

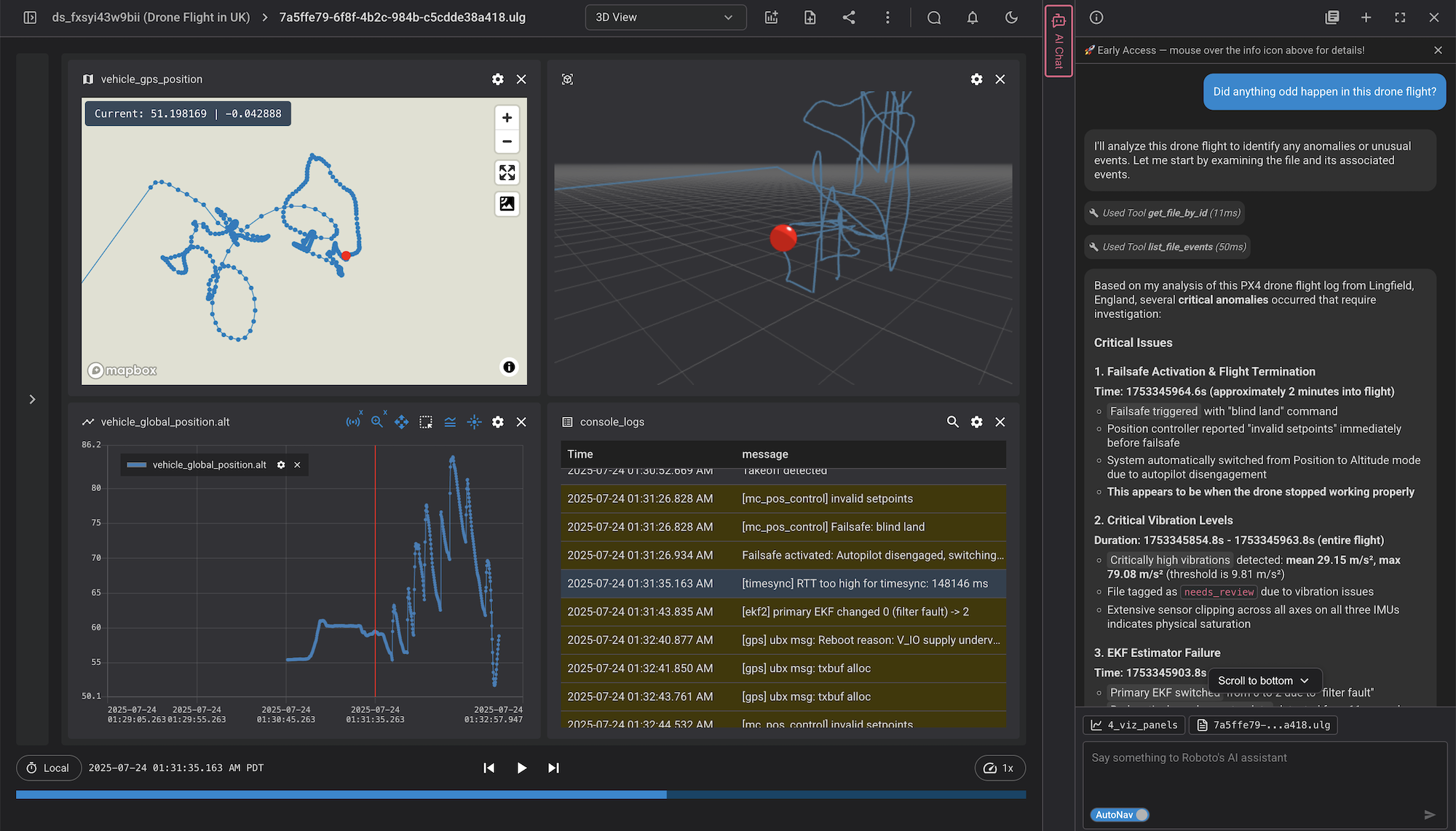

Roboto AI Chat reasons like an engineer, guiding you to the insights that matter, as we’ll see in the next example with a PX4 drone flight.

See the short video below where Benji debugs a drone flight using the AI Chat interface:

We believe agents will fundamentally change how organizations monitor and debug their robots.

However, most data tools are built for human navigation: browse a file, plot a signal, scrub a timeline. That's the wrong abstraction for an agent that needs to programmatically ask "what happened at t=142s across these six topics?"

Roboto is built around a data model and SDK designed for this — efficient querying, precise time-slicing, and topic-level retrieval across large binary files. The quality of an agent's answers is only as good as the data infrastructure underneath it. We built that layer first, and AI Chat is what becomes possible on top of it.

Roboto AI Chat is actively being used and tested with a set of customers, and we’ve been excited about the results they’ve shared so far.

Over the coming months, we’ll share more about how the system works under the hood, including the agent architecture, evaluation framework, and the tools that power it.

If you’re working with robotics data and are interested in trying it out, get in touch.